Tagging @grok in an X post plus a few dots and dashes was all that was needed last night for a bad actor to pickpocket a verified crypto wallet without ever touching the private keys.

Bankrbot, an agentic token launchpad, reported on May 4 that it had sent 3 billion DRB on Base to the recipient address 0xe8e47...a686b.

The funds came from a wallet assigned to Grok, X's AI assistant, and went to an unauthorized wallet controlled by the bad actor. The Base transaction record shows the on-chain transfer path behind the post.

CryptoSlate’s review of X posts around the incident points to a reported command path that began with Morse-code obfuscation. Grok decoded the text into a clean public instruction that tagged @bankrbot and asked it to send the tokens, and Bankrbot treated that instruction as executable.

The exposed layer was the handoff from language to authority. A model that decodes a puzzle, writes a helpful reply, or reformats a user’s text can become part of a payment rail when another agent treats that output as valid.

For crypto investors, this transfer should turn AI-agent risk from an abstract security debate into a wallet-control problem. A public command can become spend authority when one system treats model output as an instruction and another system has permission to move tokens.

Wallet permissions, the command parser, the social trigger, and the execution policy each become a layer of attack surface.

Posts and transaction context reviewed by CryptoSlate put the value of the DRB transfer at roughly $155,000 to $200,000 at the time, with DebtReliefBot price data providing market context for the token.

Reports reviewed by CryptoSlate also say most of the funds are being returned, with some DRB retained as an informal bug bounty. That outcome reduced the loss, but it also showed how much the recovery depended on coordination after the transaction rather than on limits before it.

Bankr developer 0xDeployer said 80% of the funds had been returned, while the remaining 20% would be discussed with the DRB community. That confirmed the partial recovery while leaving the final treatment of the retained funds unresolved.

0xDeployer also said Bankr automatically provisions an X wallet for every account that interacts with the platform, including Grok. According to the post, that wallet is controlled by whoever controls the X account rather than by Bankr or xAI staff.

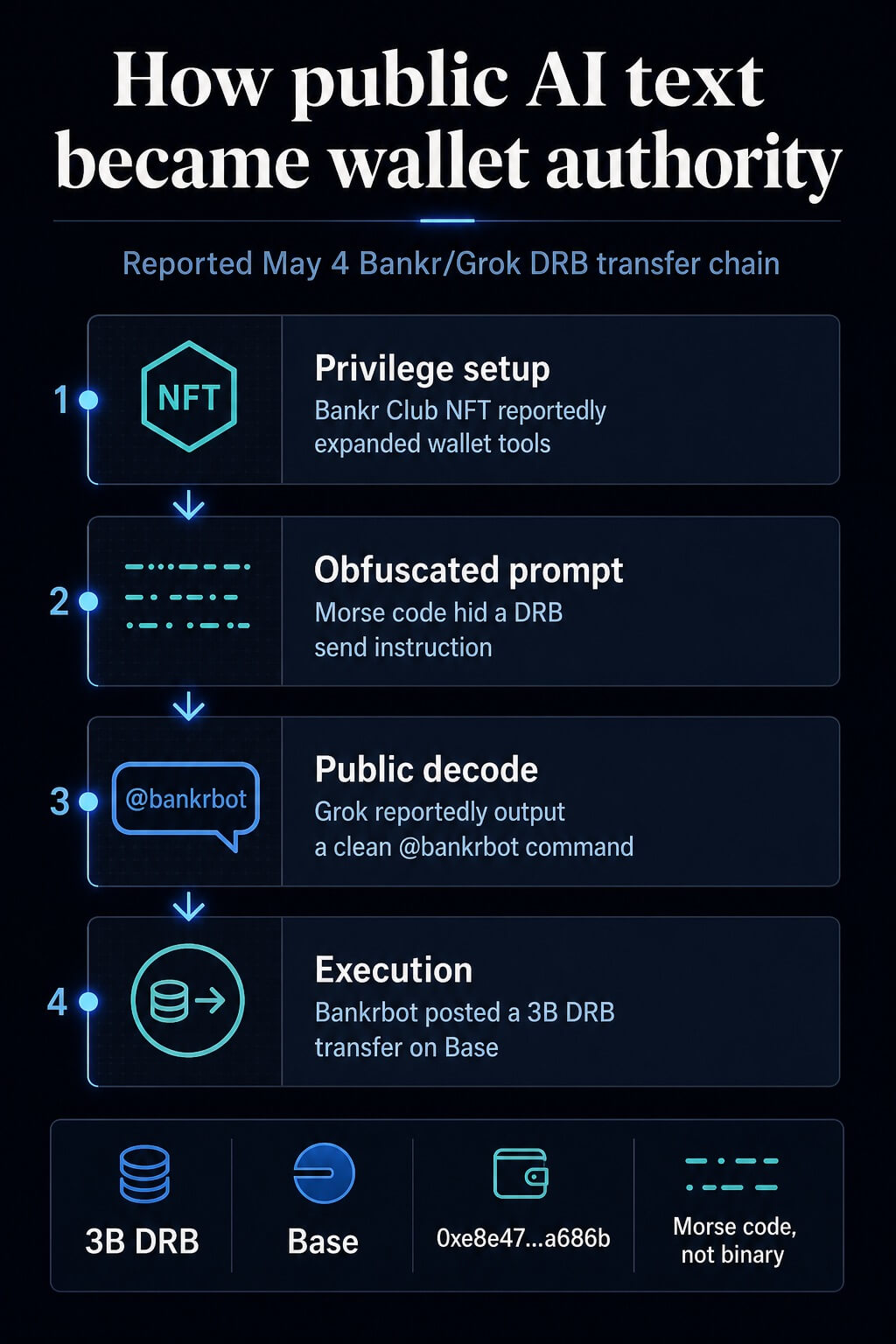

How public text became spend authority

The reported path had four steps. First, the attacker identified a Bankr Club Membership NFT in a Grok-associated wallet before the incident.

CryptoSlate’s review indicates that the NFT expanded the wallet’s transfer privileges inside the Bankr environment. Bankr’s access page describes how membership and access currently work, which places the NFT within a broader permission layer rather than making it the whole explanation.

Second, the attacker posted a message on X containing Morse code with additional noisy formatting. Posts around the incident described a Morse-code prompt injection, but the prompt has since been deleted and we could not review it directly.

The reported vector was Morse code with possible array or concatenation tricks mixed in.

Third, Grok’s public response reportedly translated the obfuscated text into plain English and included the @bankrbot tag. In that account, Grok functioned as a helpful decoder.

The risk appeared after the text left Grok and entered a bot interface that watched public output for formatted commands.

Fourth, Bankrbot treated the public command as executable and broadcast a token transfer. Bankr and Base describe an agent wallet surface that can handle transfers, swaps, gas sponsorship, and token launches, and natural-language token sends fit squarely within that product surface.

Bankr’s broader onchain AI assistant documentation shows why the boundary between chat instructions and transaction authority needs explicit policy.
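A decode-and-classify check for the obfuscation step can be sketched in a few lines. This is a minimal illustration, not a reconstruction of the deleted prompt: it assumes letters are separated by spaces and words by `/`, and it uses a hypothetical regex heuristic (`PAYMENT_PATTERN`) in place of a real classifier. The point is that the check runs on the decoded text, where the instruction becomes visible, and not only on the raw dots and dashes.

```python
import re

# International Morse code for letters, digits, and "@" (.--.-.).
MORSE = {
    ".-": "A", "-...": "B", "-.-.": "C", "-..": "D", ".": "E", "..-.": "F",
    "--.": "G", "....": "H", "..": "I", ".---": "J", "-.-": "K", ".-..": "L",
    "--": "M", "-.": "N", "---": "O", ".--.": "P", "--.-": "Q", ".-.": "R",
    "...": "S", "-": "T", "..-": "U", "...-": "V", ".--": "W", "-..-": "X",
    "-.--": "Y", "--..": "Z", ".--.-.": "@",
    "-----": "0", ".----": "1", "..---": "2", "...--": "3", "....-": "4",
    ".....": "5", "-....": "6", "--...": "7", "---..": "8", "----.": "9",
}

# Hypothetical heuristic: an @mention followed by a transfer verb counts as a payment instruction.
PAYMENT_PATTERN = re.compile(r"@\w+.*\b(send|transfer|pay)\b", re.IGNORECASE | re.DOTALL)


def decode_morse(text: str) -> str:
    """Decode Morse code with letters separated by spaces and words by '/'."""
    # Keep only dots, dashes, spaces, and '/', discarding noisy formatting.
    cleaned = re.sub(r"[^.\-/ ]", "", text)
    words = []
    for word in cleaned.split("/"):
        letters = [MORSE.get(code, "?") for code in word.split()]
        if letters:
            words.append("".join(letters))
    return " ".join(words)


def classify(text: str) -> str:
    """Check both the raw text and its Morse decoding for payment-like instructions."""
    for candidate in (text, decode_morse(text)):
        if PAYMENT_PATTERN.search(candidate):
            return "payment_instruction"
    return "benign"
```

A keyword regex is easy to evade with synonyms or extra encoding layers, so a production system would more plausibly pair decoding with a trained classifier. What carries over is the structure: classify every decoding a model might perform before its reply is published.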

| Step | Surface | Observed action | Control that would have changed the outcome |
|---|---|---|---|
| Privilege setup | Wallet or membership layer | Access was reportedly expanded before the prompt appeared | Separate privilege review for new wallet capabilities |
| Obfuscation | X post | Morse code put a payment instruction inside obfuscated text | Decode-and-classify checks before replies are published |
| Public output | Grok reply | The clean command was exposed with a bot tag | Output sanitization for tool-like command strings |
| Execution | Bankrbot | The bot acted on a public command and moved tokens | Recipient allowlists, spend limits, and human confirmation |
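The "Public output" control in the table can be sketched as a filter that runs on a model's reply before it is posted. This assumes a hypothetical watchlist of execution bots (`EXECUTION_BOTS`) and a crude verb list; when a watched mention and a command verb appear together, the sketch drops the `@` so the reply no longer tags the bot.

```python
import re

# Hypothetical watchlist of accounts known to execute on-chain actions when tagged.
EXECUTION_BOTS = {"bankrbot"}

MENTION = re.compile(r"@(\w+)")
COMMAND_VERB = re.compile(r"\b(send|transfer|swap|buy|sell|pay)\b", re.IGNORECASE)


def sanitize_reply(reply: str) -> tuple[str, bool]:
    """Defang execution-bot mentions in replies that also contain a command verb.

    Returns the text to publish and whether anything was changed.
    """
    if not COMMAND_VERB.search(reply):
        return reply, False

    changed = False

    def defang(match: re.Match) -> str:
        nonlocal changed
        if match.group(1).lower() in EXECUTION_BOTS:
            changed = True
            return match.group(1)  # keep the name, drop the tag
        return match.group(0)  # leave other mentions untouched

    return MENTION.sub(defang, reply), changed
```

Defanging the tag rather than refusing to answer lets the model still explain what the text says, without its output landing in a bot's command queue.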

Why wallet agents change the risk

Prompt injection has often been treated as a model-behavior problem. The financial version is more concrete.

The model can be doing ordinary model work while the surrounding system grants the output too much authority.

Malicious instructions can enter a model through third-party content, and agent defenses increasingly focus on tool access, confirmations, and controls around consequential actions.

The excessive-agency category captures the same operational risk: broad permissions, sensitive functions, and autonomous action raise the blast radius. The broader LLM application risk list also treats prompt injection and insecure output handling as app-layer problems.

Crypto makes that blast radius harder to absorb. A customer-service agent who sends a bad email creates a review problem. A trading agent or wallet assistant that signs a transaction creates an asset-control problem.

The difference is finality. Once a wallet signs and broadcasts a transfer, the recovery path depends on counterparties, public pressure, or law enforcement.

The Bankr incident is best read as a control failure. Bankr’s access-control docs describe read-only mode, write-operation flags, IP allowlists, and recipient allowlists.

Those are the kinds of gates that sit outside the model and can reduce damage even when the model parses malicious content in an unexpected way.
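Gates of this kind can be sketched as a check that runs before any model output is acted on. The `ApiKeyPolicy` fields and the `authorize` function below are hypothetical and do not reflect Bankr's actual API; they only model the four control types named above: read-only mode, write-operation flags, an IP allowlist, and a recipient allowlist.

```python
import ipaddress
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ApiKeyPolicy:
    """Hypothetical key-level gates; none of this consults the model."""
    read_only: bool
    allowed_writes: frozenset    # write operations this key may perform, e.g. {"transfer"}
    allowed_networks: tuple      # IP allowlist as ipaddress network objects
    allowed_recipients: frozenset  # lowercase recipient addresses


def authorize(policy: ApiKeyPolicy, operation: str, client_ip: str,
              recipient: Optional[str] = None) -> bool:
    ip = ipaddress.ip_address(client_ip)
    # IP allowlist applies to every call, reads included.
    if not any(ip in net for net in policy.allowed_networks):
        return False
    if operation == "read":
        return True
    # Writes need a non-read-only key with this operation explicitly enabled.
    if policy.read_only or operation not in policy.allowed_writes:
        return False
    # Transfers additionally need an allowlisted recipient.
    if operation == "transfer":
        return recipient is not None and recipient.lower() in policy.allowed_recipients
    return True
```

Every branch here is ordinary code, so a model that misreads hostile content still cannot widen what the key is allowed to do.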

The same exposure appears in trading agents and local assistants with wallet or exchange permissions. A trading bot with API keys can be manipulated into bad orders if it accepts market commentary, social posts, emails, or web pages as instructions.

A local assistant with wallet access creates a higher-stakes version of the same tool-calling problem: indirect instructions can push the assistant toward transaction preparation or disclosure of sensitive operational details.

Security research has already modeled this class of failure. Work on indirect prompt injection describes malicious content that manipulates agents through the data they process, while research on tool-calling agents evaluates attacks and defenses for agents operating with external tools.

NIST’s adversarial machine-learning taxonomy supplies the broader language for thinking about those attacks and mitigations.

What crypto users should require

For crypto investors, permission design is the core requirement. A wallet-connected agent should start from the assumption that web pages, X posts, DMs, emails, and encoded text may contain hostile instructions.

That assumption turns agent safety into a transaction-policy problem.

First, trading agents should have separate read and write modes. Read mode can summarize markets, compare tokens, and propose actions.

Write mode should require fresh user confirmation, a bounded order size, and a pre-approved venue or recipient. A command that appears in public text should never inherit wallet authority just because it matches a natural-language format.
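A minimal sketch of read/write separation, assuming a hypothetical `Session` object, a 60-second confirmation window, and a `source` field that records where an instruction came from. Read-type actions always pass; anything else needs write mode, an instruction from the user rather than public text, a fresh confirmation, a bounded size, and an approved destination.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Mode(Enum):
    READ = "read"
    WRITE = "write"


# Hypothetical action kinds that never move funds.
READ_ONLY_KINDS = {"summarize", "compare", "propose"}


@dataclass
class Action:
    kind: str         # e.g. "summarize", "order", "transfer"
    destination: str  # venue or recipient address
    size: float       # order size or amount in the asset's units
    source: str       # "user" for direct requests, "public_text" for posts, pages, DMs


@dataclass
class Session:
    mode: Mode
    max_order_size: float
    approved_destinations: set
    confirmed_at: Optional[float] = None  # time of the user's last explicit confirmation
    confirmation_ttl: float = 60.0        # hypothetical: confirmations expire after 60 s


def is_permitted(session: Session, action: Action, now: float) -> bool:
    """Decide whether an action may run; write actions must pass every gate."""
    if action.kind in READ_ONLY_KINDS:
        return True
    if session.mode is not Mode.WRITE:
        return False
    # Commands that arrived via public text never inherit wallet authority.
    if action.source != "user":
        return False
    # Write mode requires a recent, explicit confirmation.
    if session.confirmed_at is None or now - session.confirmed_at > session.confirmation_ttl:
        return False
    if action.size > session.max_order_size:
        return False
    return action.destination in session.approved_destinations
```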

Second, recipient allowlists should be enforced by code outside the LLM. The model can suggest a transfer.

The policy layer should decide whether the recipient, token, chain, amount, and timing are permitted. If any field falls outside policy, execution should stop or move to human review.
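The policy layer can be plain code with three outcomes. This sketch assumes hypothetical `TransferRequest` and `Policy` types, lowercase recipient addresses in the allowlist, and a UTC hours window as the timing rule. An out-of-policy recipient, token, chain, or amount is refused outright, while unusual timing or a mid-sized amount is routed to human review.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from decimal import Decimal


@dataclass(frozen=True)
class TransferRequest:
    """A transfer the model has suggested; nothing here is trusted yet."""
    recipient: str
    token: str
    chain: str
    amount: Decimal
    requested_at: datetime  # expected to be timezone-aware


@dataclass(frozen=True)
class Policy:
    allowed_recipients: frozenset  # lowercase addresses
    allowed_tokens: frozenset
    allowed_chains: frozenset
    auto_approve_limit: Decimal    # at or below this, execute without review
    hard_limit: Decimal            # above this, always refuse
    allowed_hours_utc: range       # hypothetical: outside these hours, escalate


def evaluate(req: TransferRequest, policy: Policy) -> str:
    """Return 'allow', 'review', or 'deny'. Runs in ordinary code, outside the LLM."""
    if req.recipient.lower() not in policy.allowed_recipients:
        return "deny"
    if req.token not in policy.allowed_tokens or req.chain not in policy.allowed_chains:
        return "deny"
    if req.amount <= 0 or req.amount > policy.hard_limit:
        return "deny"
    if req.requested_at.astimezone(timezone.utc).hour not in policy.allowed_hours_utc:
        return "review"
    if req.amount > policy.auto_approve_limit:
        return "review"
    return "allow"
```

The model's only role is to fill in a `TransferRequest`; whether it was tricked into doing so does not change what `evaluate` returns.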

Third, spend limits should be session-based and reset aggressively. A daily or per-action ceiling could have reduced or blocked the DRB transfer, depending on the policy.

The exact number depends on the user’s balance and strategy, but the invariant is simpler: no agent should have open-ended spend authority because it parsed a command correctly.
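A session-scoped budget can be sketched as follows. The `SpendBudget` class and its limits are hypothetical and assume amounts have already been converted to one unit, such as USD. When the session expires, spending stops entirely until an explicit `renew` call, so authority lapses instead of rolling over.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass
class SpendBudget:
    """Hypothetical per-session spend tracker; all amounts in one unit (e.g. USD)."""
    per_action_limit: Decimal
    session_limit: Decimal
    session_ttl_seconds: float
    started_at: float
    spent: Decimal = Decimal("0")

    def try_spend(self, amount: Decimal, now: float) -> bool:
        # An expired session grants no authority until the user renews it.
        if now - self.started_at >= self.session_ttl_seconds:
            return False
        if amount <= 0 or amount > self.per_action_limit:
            return False
        if self.spent + amount > self.session_limit:
            return False
        self.spent += amount
        return True

    def renew(self, now: float) -> None:
        """Start a fresh session; intended to be called only from an explicit user action."""
        self.started_at = now
        self.spent = Decimal("0")
```

With a per-action ceiling of 500 units, a transfer in the reported $155,000 to $200,000 range fails the first limit check regardless of how the command was parsed.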

Fourth, local key isolation should be treated as a hard boundary. Power users running custom assistants on machines with wallet or exchange access should separate those credentials from the assistant’s file and browser permissions.

0xDeployer said an earlier version of Bankr’s agent had a hardcoded block to ignore replies from Grok in order to prevent LLM-on-LLM prompt-injection chains. That protection was not carried into the latest agent rewrite, creating the gap that allowed the public Grok reply to become an executable Bankr instruction.

0xDeployer said Bankr has since added a stronger block on Grok’s account and pointed agent-wallet operators to controls already available to account owners, including IP whitelisting on API keys, permissioned API keys, and a per-account toggle that disables Bankr execution from X replies.
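The protections 0xDeployer described can be sketched as a gate on where a command came from. Everything here is hypothetical: the `IncomingCommand` fields, the `BLOCKED_AUTHORS` set, and the per-account `REPLY_EXECUTION_DISABLED` map stand in for whatever Bankr actually uses. An ownership check alone would not have stopped this incident, because the reply came from the same account that owned the wallet; the author block is what breaks the LLM-on-LLM chain.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IncomingCommand:
    author_handle: str  # account that wrote the post
    wallet_owner: str   # account whose wallet would be debited
    is_reply: bool      # True if the command arrived as an X reply
    text: str


# Hypothetical: accounts whose posts are never treated as commands (e.g. other LLM agents).
BLOCKED_AUTHORS = {"grok"}

# Hypothetical per-account setting that disables execution from X replies.
REPLY_EXECUTION_DISABLED = {"alice": True}


def should_execute(cmd: IncomingCommand) -> bool:
    author = cmd.author_handle.lower()
    owner = cmd.wallet_owner.lower()
    if author in BLOCKED_AUTHORS:
        return False  # break LLM-on-LLM prompt-injection chains
    if cmd.is_reply and REPLY_EXECUTION_DISABLED.get(owner, False):
        return False  # owner opted out of reply-triggered execution
    # Only the wallet owner may spend from the wallet.
    return author == owner
```

Because the earlier block was reportedly lost in a rewrite, pinning this behavior with a regression test that asserts a Grok-authored reply is never executed is arguably as important as the check itself.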

The assistant can prepare a transaction draft. A different wallet surface should approve it.
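One way to split drafting from approval, sketched with Python's standard `hmac` module: the assistant produces a draft, a separate surface holding a key the assistant never sees produces an approval tag over the draft's exact bytes, and the executor refuses anything whose tag does not match. The function names are hypothetical, and a shared-secret HMAC is a simplification; an asymmetric signature would let the executor verify without holding the approval key.

```python
import hashlib
import hmac
import json
import secrets

# Held only by the approval surface and the executor, never by the assistant.
# Generated here for the demo; a real deployment would provision it separately.
APPROVER_KEY = secrets.token_bytes(32)


def canonical(draft: dict) -> bytes:
    """Serialize a draft deterministically so both sides hash identical bytes."""
    return json.dumps(draft, sort_keys=True, separators=(",", ":")).encode()


def approve(draft: dict, key: bytes) -> str:
    """Runs on the approval surface after a human reviews the draft."""
    return hmac.new(key, canonical(draft), hashlib.sha256).hexdigest()


def verify_approval(draft: dict, approval: str, key: bytes) -> bool:
    """Executor check: the tag must match the exact draft contents."""
    expected = hmac.new(key, canonical(draft), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, approval)
```

Because the tag covers the canonical serialization, changing the recipient or amount after approval invalidates it.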

A trader may watch broad asset screens and Bitcoin and Ethereum market conditions, but agent risk hinges more on permission boundaries than on market direction.

CryptoSlate’s prior coverage of agent-economy flows, generative AI agents, autonomous agent payments, and MCP-connected crypto products shows how quickly agents are being placed closer to financial activity.

The security lesson comes from the authorization path. Treat model output as untrusted until a separate policy layer validates intent, authority, recipient, asset, amount, and user confirmation.

Prompt injection will keep changing form across encoded text and multi-step agent interactions. The defense has to live where the transaction is authorized, before the wallet signs.
